QA Chatbot

A QA chatbot is a type of chatbot designed to answer questions and provide information to users. It uses natural language processing (NLP) and machine learning algorithms to understand and respond to user queries. QA chatbots can be integrated into websites, messaging platforms, and mobile applications to provide 24/7 customer support and answer frequently asked questions.

Key Components

- Natural Language Processing

- Dialog Management

- Intent Recognition

- Integration

- Analytics

- User Interface

Natural Language Processing

- This component is responsible for understanding and interpreting the user's input. NLP is a subfield of artificial intelligence (AI) that deals with analyzing, understanding, and generating human language.

Dialog Management

- This component manages the conversation flow between the chatbot and the user. It decides what the chatbot should say next based on the user's input and the chatbot's pre-programmed responses.
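A minimal sketch of the idea, assuming the user's intent has already been recognized: a dialog manager can be modelled as a finite-state machine that maps the current conversation state and the recognized intent to a reply and a next state. The state names, intents, and responses in `TRANSITIONS` are hypothetical examples, not part of any particular chatbot framework.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical response table: (current state, intent) -> (reply, next state).
TRANSITIONS: Dict[Tuple[str, str], Tuple[str, str]] = {
    ("start", "greet"): ("Hello! What would you like to know?", "awaiting_question"),
    ("awaiting_question", "ask_hours"): ("We are open 9am to 5pm, Monday to Friday.", "awaiting_question"),
    ("awaiting_question", "goodbye"): ("Thanks for chatting. Goodbye!", "end"),
}
FALLBACK = "Sorry, I didn't understand that. Could you rephrase?"


@dataclass
class DialogManager:
    state: str = "start"
    history: List[Tuple[str, str]] = field(default_factory=list)

    def next_reply(self, intent: str) -> str:
        """Pick the reply for the current state and intent, then advance the state.

        Unknown (state, intent) pairs get the fallback reply and keep the state unchanged.
        """
        reply, next_state = TRANSITIONS.get((self.state, intent), (FALLBACK, self.state))
        self.history.append((intent, reply))
        self.state = next_state
        return reply
```

For example, `DialogManager().next_reply("greet")` returns the greeting and moves the conversation to `awaiting_question`. Unknown intents fall back to a clarification prompt without changing state, which keeps the conversation recoverable.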

Intent Recognition

- Intent recognition is the process of identifying the user's intent based on their input. The chatbot uses intent recognition to determine what the user wants to accomplish and respond appropriately.
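Production bots usually recognize intents with a trained classifier (often a fine-tuned BERT model). As a simpler stand-in, the sketch below compares the user's message with a few hypothetical example utterances per intent using bag-of-words cosine similarity, and falls back when nothing is similar enough. The intents, example utterances, and the 0.3 threshold are assumptions chosen for illustration.

```python
import math
import re
from collections import Counter

# Hypothetical training examples: a few sample utterances per intent.
INTENT_EXAMPLES = {
    "ask_hours": ["what are your opening hours", "when are you open", "what time do you close"],
    "track_order": ["where is my order", "track my package", "has my order shipped"],
    "greet": ["hello", "hi there", "good morning"],
}


def bag_of_words(text: str) -> Counter:
    """Lowercase the text and count its word tokens (punctuation is dropped)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def recognize_intent(text: str, threshold: float = 0.3) -> str:
    """Return the intent of the most similar example utterance, or 'fallback'."""
    query = bag_of_words(text)
    best_intent, best_score = "fallback", 0.0
    for intent, examples in INTENT_EXAMPLES.items():
        for example in examples:
            score = cosine(query, bag_of_words(example))
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent if best_score >= threshold else "fallback"
```

With these examples, `recognize_intent("When are you open today?")` returns `"ask_hours"`, while "Tell me a joke" shares no words with any example and returns `"fallback"`.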

Integration

- Chatbots can be integrated with other systems and platforms such as CRM, marketing automation tools, and social media platforms. This allows the chatbot to access relevant data and provide personalized responses to users.

Analytics

- Analytics is a key component of a chatbot solution: it lets you track user behavior and measure the effectiveness of your chatbot. This can help you identify areas for improvement and optimize the chatbot's performance.

User Interface

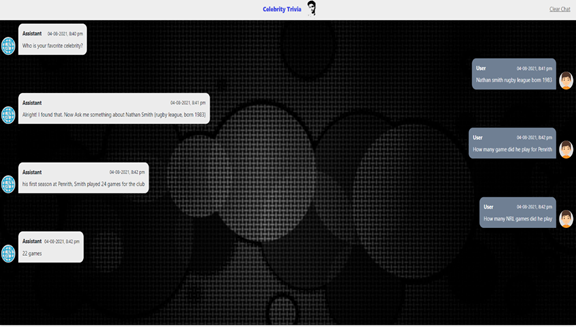

- The chatbot's user interface (UI) is the visual and interactive aspect of the chatbot that the user interacts with. The UI can be in the form of a text-based chat interface or a voice-based assistant.

Why Use QA Chatbots?

01. Improved customer service

QA chatbots provide 24/7 support, answering customer queries and providing information instantly, improving the overall customer experience.

02. Increased efficiency

QA chatbots automate repetitive tasks and handle simple queries, freeing up human support agents to focus on more complex issues, increasing efficiency and reducing response times.

03. Cost savings

By automating tasks, QA chatbots can reduce labor costs and improve the overall efficiency of customer service operations.

04. Personalization

QA chatbots can be customized to provide a personalized experience for customers, improving customer satisfaction and loyalty.

05. Scalability

QA chatbots can handle a large volume of customer queries simultaneously, providing a scalable solution for businesses.

Benefits

High Accuracy

BERT (Bidirectional Encoder Representations from Transformers) is a powerful pre-trained language model that achieved state-of-the-art performance on several natural language processing (NLP) tasks when it was released. The Q/A BERT model has been fine-tuned on Q/A tasks to answer questions with high accuracy, which makes it a reliable choice for Q/A applications.
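Accuracy on SQuAD-style Q/A is usually reported with two metrics: Exact Match (EM) and token-level F1. The sketch below re-creates that scoring logic along the lines of the official SQuAD v1.1 evaluation script (normalize both answers, then compare); it is a simplified re-implementation for illustration, not the official script.

```python
import re
import string
from collections import Counter

PUNCTUATION = set(string.punctuation)


def normalize_answer(text: str) -> str:
    """Lowercase, strip punctuation and the articles a/an/the, and collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in PUNCTUATION)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, ground_truth: str) -> float:
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize_answer(prediction) == normalize_answer(ground_truth))


def f1_score(prediction: str, ground_truth: str) -> float:
    """Harmonic mean of token precision and recall after normalization."""
    pred_tokens = normalize_answer(prediction).split()
    gold_tokens = normalize_answer(ground_truth).split()
    common = Counter(pred_tokens) & Counter(gold_tokens)
    num_same = sum(common.values())
    if num_same == 0:
        return 0.0
    precision = num_same / len(pred_tokens)
    recall = num_same / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)


def best_over_references(metric, prediction: str, ground_truths: list) -> float:
    """SQuAD keeps the best score over all reference answers for a question."""
    return max(metric(prediction, gt) for gt in ground_truths)
```

For instance, `f1_score("in Paris, France", "Paris")` gives 0.5: one shared token, so precision is 1/3 and recall is 1.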

Flexibility

The Q/A BERT model can be fine-tuned on different types of Q/A tasks, such as open-domain Q/A, factoid Q/A, and conversational Q/A, making it a flexible model for a wide range of use cases.

Contextual Understanding

BERT is a context-aware model, which means it takes into account the context of a sentence and the relationships between the words in it. This contextual understanding helps the Q/A BERT model answer questions accurately by considering the context of the question.
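BERT's context awareness comes from self-attention: each token's new representation is a weighted average of all tokens' value vectors, weighted by how well its query matches their keys. The sketch below is a single head of scaled dot-product attention in plain Python. Real BERT layers first compute queries, keys, and values with learned projections and use multiple heads, which are omitted here; the vectors are made-up toy values.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries: Matrix, keys: Matrix, values: Matrix) -> Matrix:
    """Scaled dot-product attention, softmax(Q K^T / sqrt(d_k)) V, computed row by row."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # How strongly this token attends to every token in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Mix the value vectors according to those weights.
        outputs.append([sum(w * v[c] for w, v in zip(weights, values)) for c in range(len(values[0]))])
    return outputs


# Toy example: three tokens with 2-dimensional vectors; self-attention uses the same sequence for Q, K, V.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(attention(x, x, x))
```

When two keys are identical, they receive equal weight, so the output is simply the mean of their value vectors.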

Efficient

The Q/A BERT model can process passages of text and return answers quickly enough for real-time use, which makes it well suited to applications that require fast and accurate answers to questions.

Related Insights

QA Bot — Applications:

Question-answering systems have a wide range of use cases. Some of them are described below.

Finding Answers in Customer Q&A and Reviews

On e-commerce sites such as Amazon, each product collects many customer reviews. A QA system can draw on the information in those reviews and make it available to new users who are considering buying the product, answering their questions with any relevant details the reviews contain.

Making Advisor Bots

As a simple example, we can build an open-domain question-answering bot that is aware of trending topics on Wikipedia and makes suggestions to users accordingly (e.g., a trip advisor bot).

Reading Comprehension as a Question Answering System

One of the simplest forms of QA system is machine reading comprehension (MRC), where the task is to find a relatively short answer to a question in an unstructured text (e.g., finding the answer to a question in an unseen passage).
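In a BERT-style extractive reader, the model outputs a start score and an end score (logits) for every token of the passage, and the answer is the span whose start and end scores sum highest, subject to the start not coming after the end and a maximum answer length. The sketch below shows only that decoding step; the tokens and logits are made-up numbers, and real implementations additionally skip question and special tokens.

```python
from typing import List, Tuple


def best_span(start_logits: List[float], end_logits: List[float], max_answer_len: int = 30) -> Tuple[int, int]:
    """Return (start, end) token indices maximizing start_logit + end_logit with start <= end."""
    best, best_score = (0, 0), float("-inf")
    for i, s in enumerate(start_logits):
        # Only consider ends at or after the start, within the length limit.
        for j in range(i, min(i + max_answer_len, len(end_logits))):
            score = s + end_logits[j]
            if score > best_score:
                best, best_score = (i, j), score
    return best


tokens = ["the", "eiffel", "tower", "is", "in", "paris", "."]
start_logits = [0.1, 0.2, 0.1, 0.0, 0.3, 2.5, 0.0]
end_logits = [0.0, 0.1, 0.2, 0.1, 0.0, 2.0, 0.4]
start, end = best_span(start_logits, end_logits)
print(" ".join(tokens[start:end + 1]))  # prints "paris"
```

Searching over valid pairs, rather than taking the two argmaxes independently, avoids returning a span whose end precedes its start.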

QA System — Libraries/Tools:

The Bidirectional Encoder Representations from Transformers (BERT) model captures information from both sides of a word, from the left as well as from the right, which makes it better at inferring the meaning of a particular word. It is pre-trained to fill in masked words, a task loosely reminiscent of the autocomplete suggestions we see when searching on Google. BERT Base has 12 layers and BERT Large has 24. The tokenized input is passed through these layers in sequence: each layer's output is fed to the next layer, and the output of the final layer is the model's output.
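Before text reaches BERT's layers, it is split into WordPiece sub-word units, so rare words become sequences of known pieces instead of unknown tokens. The sketch below implements the greedy longest-match-first WordPiece algorithm used by BERT's tokenizer, with a tiny hypothetical vocabulary; real BERT vocabularies contain roughly 30,000 pieces, and the real tokenizer also splits off punctuation first. Lowercasing mirrors the uncased models.

```python
from typing import List, Set

# Toy vocabulary (hypothetical), for illustration only.
VOCAB = {"[UNK]", "play", "##ing", "##ed", "un", "##believ", "##able", "the", "game"}


def wordpiece(word: str, vocab: Set[str], unk: str = "[UNK]") -> List[str]:
    """Greedy longest-match-first WordPiece split of a single word."""
    pieces, start = [], 0
    while start < len(word):
        end, match = len(word), None
        # Try the longest remaining substring first, shrinking until a piece matches.
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # continuation pieces carry a ## prefix
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            return [unk]  # no piece fits: the whole word becomes [UNK]
        pieces.append(match)
        start = end
    return pieces


def tokenize(text: str, vocab: Set[str]) -> List[str]:
    """Lowercase, split on whitespace, and WordPiece each word."""
    tokens: List[str] = []
    for word in text.lower().split():
        tokens.extend(wordpiece(word, vocab))
    return tokens
```

With this vocabulary, `tokenize("The game was unbelievable", VOCAB)` yields `['the', 'game', '[UNK]', 'un', '##believ', '##able']`: "was" is not in the toy vocabulary, so it becomes `[UNK]`.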

GPT-2 is a large transformer-based language model trained using the simple task of predicting the next word in 40GB of high-quality text from the internet. This simple objective proves sufficient to train the model to learn a variety of tasks due to the diversity of the dataset.
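GPT-2 learns its next-word objective with a large Transformer, but the objective itself can be shown with a much simpler model. The sketch below is a toy, count-based bigram model (a deliberate stand-in, not GPT-2): it learns which word most often follows each word in a tiny made-up corpus, predicts the next word, and generates text greedily one word at a time.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram(corpus: str) -> Dict[str, Counter]:
    """Count, for every word, which words follow it in the corpus."""
    words = corpus.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    return counts.most_common(1)[0][0] if counts else None


def generate(model: Dict[str, Counter], start: str, length: int) -> str:
    """Greedily append predicted words, as a language model does token by token."""
    words = [start.lower()]
    for _ in range(length):
        nxt = predict_next(model, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


model = train_bigram("the bot answers the question . the bot answers the user . the user asks the bot")
print(generate(model, "the", 3))  # prints "the bot answers the"
```

GPT-2 replaces the count table with a Transformer that conditions on all previous words, not just the last one, but the training signal is the same: predict what comes next.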

FastAPI is a modern, fast (high-performance) web framework for building APIs with Python 3.6+ based on standard Python type hints. Its documentation describes its performance as on par with NodeJS and Go (thanks to Starlette and Pydantic), making it one of the fastest Python frameworks available. Here it is used to expose the bot as an API endpoint that all users can access.

Process Explanation:

1. First, we define the model architecture. In our case, we use the BERT Large Uncased model.

2. Next, we fine-tune the model on the SQuAD 1.1 training set and validate it on the SQuAD 1.1 validation set.

3. Because we are building an open-domain question-answering bot, we use the Wikipedia library to extract the necessary information about a particular entity. We then pass that Wikipedia page to our model so that it can answer questions related to the searched entity.

4. We have also built a closed-domain question-answering system using the CDQA library. It uses the same BERT model, but here the information about the searched topic comes not from Wikipedia but from a PDF file that we provide. The user supplies the document, and the model answers questions based on its content.

5. Finally, we create an API that makes the bot available to end users so that they can test it.
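BERT accepts a limited input length (512 tokens), while a full Wikipedia page is usually much longer, so in practice step 3 needs the page split into chunks that the model reads one at a time; the highest-scoring answer across chunks is then returned. A common approach is overlapping windows, sketched below. The window size of 384 and the overlap of 128 tokens are assumptions, and a real pipeline would also reserve room in each window for the question and BERT's special tokens.

```python
from typing import List


def sliding_windows(tokens: List[str], max_len: int = 384, overlap: int = 128) -> List[List[str]]:
    """Split a long token list into windows of at most `max_len` tokens.

    Consecutive windows share `overlap` tokens, so any answer no longer than
    `overlap` tokens appears whole in at least one window.
    """
    if max_len <= overlap:
        raise ValueError("max_len must be larger than overlap")
    step = max_len - overlap
    windows = []
    for start in range(0, len(tokens), step):
        windows.append(tokens[start:start + max_len])
        if start + max_len >= len(tokens):
            break  # this window already reaches the end of the page
    return windows
```

The overlap trades extra computation for robustness: without it, an answer that straddles a chunk boundary would be cut in two and could not be extracted from either chunk.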

QA Bot Demo (Sample Screenshots):